Residual standard error: 0.04689 on 18 degrees of freedom
Multiple R-squared: 0.07649, Adjusted R-squared: 0.02519
F-statistic: 1.491 on 1 and 18 DF, p-value: 0.2378

Creating the scatterplot with the R-squared value on the plot:

plot(x,y)
abline(Model)
legend("topleft",legend=paste("R2 is", format(summary(Model)$r. ...
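The legend call above is cut off in the source, so here is a minimal sketch of how the full plot could be produced. It assumes the truncated expression refers to `summary(Model)$r.squared`. The simulated `x` and `y`, the `digits = 3` rounding and `bty = "n"` are illustrative choices, not values from the original post.

```r
# Hypothetical data: the article's actual x and y are not shown,
# so 20 simulated points stand in for them here.
set.seed(1)
x <- rnorm(20)
y <- 0.5 * x + rnorm(20)

# Fit the simple linear regression of y on x
Model <- lm(y ~ x)

# Pull R-squared out of the model summary
# (assumed completion of the truncated `summary(Model)$r.` in the text)
r2 <- summary(Model)$r.squared

# Scatterplot, fitted regression line, and R-squared shown in the legend
plot(x, y)
abline(Model)
legend("topleft",
       legend = paste("R2 is", format(r2, digits = 3)),
       bty = "n")  # assumption: no box around the legend
```

Only base R graphics are used, so no package needs to be loaded.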

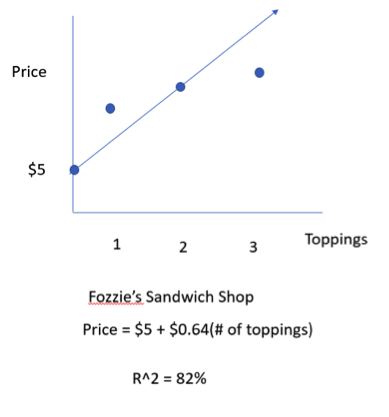

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

The R-squared value is the coefficient of determination: it gives the proportion (or percentage) of the variation in the dependent variable that is explained by the independent variable. To display this value on a scatterplot with the regression line, without help from any package, we can use the plot function together with the abline and legend functions.

Here’s what the R-squared equation looks like:

R-squared = 1 − (First Sum of Errors / Second Sum of Errors)

The formula divides the first sum of errors by the second sum of errors and subtracts the result from 1. Keep in mind that this is the very last step in calculating R-squared. In symbols:

$$R^2 = 1 - \frac{\sum_i (y_i - \hat{y}_i)^2}{\sum_i (y_i - \bar{y})^2}$$

The first sum is the sum of squared residuals, that is, the squared distances between each observed value $y_i$ and its fitted value $\hat{y}_i$. The second sum is the total sum of squares: the squared distances of each $y_i$ from the mean $\bar{y}$.
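The R-squared formula described here can be checked by hand in R. The sketch below takes the "first sum of errors" to be the residual sum of squares and the "second sum of errors" to be the total sum of squares around the mean, which is the standard reading. The data are simulated for illustration, and the names `ss_res`, `ss_tot` and `r2_manual` are hypothetical.

```r
# Hypothetical data standing in for the article's x and y
set.seed(1)
x <- rnorm(20)
y <- 0.5 * x + rnorm(20)

Model <- lm(y ~ x)

# First sum of errors: squared residuals (observed minus fitted values)
ss_res <- sum(residuals(Model)^2)

# Second sum of errors: squared distances of y from its mean
ss_tot <- sum((y - mean(y))^2)

# Last step: divide the first sum by the second and subtract from 1
r2_manual <- 1 - ss_res / ss_tot

# Value reported by summary() as "Multiple R-squared"
r2_summary <- summary(Model)$r.squared

# TRUE if the two agree up to floating-point tolerance
print(all.equal(r2_manual, r2_summary))
```

For a model with an intercept, as here, the hand calculation should agree with the value that `summary()` reports.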
